I travelled to Galway (Ireland) in early October for the First International Workshop on Debugging Ontologies and Ontology Mappings, or WoDOOM 2012 for short, which was co-located with EKAW 2012. With around 20 attendees and 4 speakers, the half-day workshop was fairly small, but it was definitely an interesting start to what will hopefully be a series of workshops.

The invited speaker was Bijan Parsia, who gave a rather awesome talk laying out the landscape of what we generally refer to as ‘errors’ in OWL ontologies. We can categorise errors into logical and non-logical errors. Logical errors include the ‘classical’ errors such as incoherence and inconsistency, wrong entailments, missing entailments, but also less obvious problems such as tautologies and ‘concept idleness’. Non-logical errors are problems that we might not think of straight away when we talk about debugging; these include wrong naming of concepts and properties, structural irregularities, and performance problems.

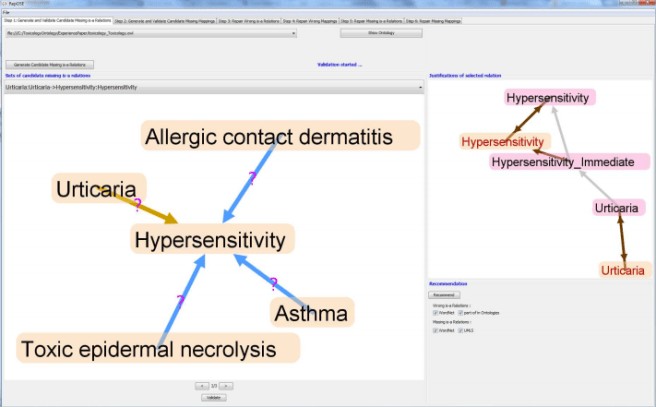

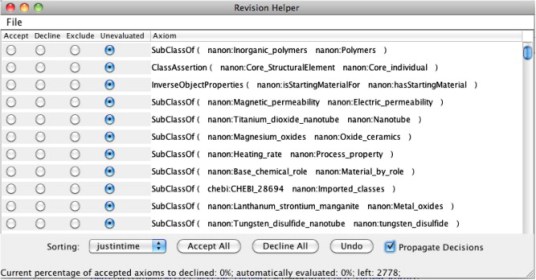

The first research paper, by Valentina Ivanova, Jonas Laurila Bergman, Ulf Hammerling and Patrick Lambrix, dealt with the debugging of ontology alignments based on an interesting use case (ToxOntology, an ontology describing toxicological information of food). The main idea was to validate mappings based on the structural relations of concepts in the ontology. Valentina also demoed a prototype of the RepOSE tool, which nicely combines the “accept/reject” task of debugging alignments with a graph-based user interface (see screenshot below), making the job slightly less painful.

Next up was Tu Anh Nguyen from the Open University, who presented her work on justification-based debugging using patterns and natural language. The approach taken to measuring the cognitive complexity of justifications is very appealing: they first identified a set of frequently occurring patterns in justifications, where each pattern is a subset of a justification containing at most 4 axioms, using justifications from around 500 ontologies. The 50 most frequent patterns were then translated into natural language and evaluated using a Mechanical Turk-style web service by presenting the ‘rule’ to a user, then asking them to decide whether a given entailment followed from that rule. This is quite close to what we did in our complexity study, but with the advantage that the natural language rules could be presented to a much wider audience than our DL/OWL Manchester syntax patterns. The result of the user study was a ranking of the most frequent rules, which can be used to rank the complexity of OWL justifications, at least in their natural language form. It would obviously be interesting to find out whether the complexity measure translates directly to Manchester syntax as used in Protégé, for example.
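To make the pattern-mining step a bit more concrete, here is a minimal sketch of how one might count frequently occurring sub-patterns across a collection of justifications. This is my own illustration, not the implementation from the paper: the tuple encoding of axioms, the placeholder renaming, and the names `pattern_of` and `frequent_patterns` are all assumptions made up for this example.

```python
from collections import Counter
from itertools import combinations, permutations


def pattern_of(axioms):
    """Return a canonical pattern for a small group of axioms.

    Assumption for this sketch: an axiom is a tuple such as
    ("SubClassOf", "Cat", "Mammal"). Entity names are replaced by
    placeholders X0, X1, ... so that only the structure remains.
    """
    best = None
    # Try every ordering of the axioms (at most 4! = 24 for 4 axioms)
    # and keep the smallest rendering, so axiom order does not matter.
    for ordering in permutations(axioms):
        names = {}
        rendered = []
        for kind, *args in ordering:
            placeholders = tuple(
                names.setdefault(arg, f"X{len(names)}") for arg in args
            )
            rendered.append((kind, *placeholders))
        candidate = tuple(rendered)
        if best is None or candidate < best:
            best = candidate
    return best


def frequent_patterns(justifications, max_size=4, top=50):
    """Count patterns over all axiom subsets of up to max_size axioms."""
    counts = Counter()
    for justification in justifications:
        seen = set()
        for size in range(1, min(max_size, len(justification)) + 1):
            for subset in combinations(justification, size):
                seen.add(pattern_of(subset))
        # Count each pattern at most once per justification.
        counts.update(seen)
    return counts.most_common(top)


# Two toy justifications with different names but the same structure.
toy_justifications = [
    [("SubClassOf", "Cat", "Mammal"), ("SubClassOf", "Mammal", "Animal")],
    [("SubClassOf", "Car", "Vehicle"), ("SubClassOf", "Vehicle", "Thing")],
]
for pattern, count in frequent_patterns(toy_justifications):
    print(count, pattern)
```

Both toy justifications collapse to the same "subclass chain" pattern, which is the kind of recurring shape that would then be verbalised and shown to study participants. Real OWL axioms have nested class expressions, so a proper version would need a richer encoding than flat tuples.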

And finally, I presented my paper “Declutter your justifications”, which deals with grouping multiple justifications based on their structural similarities. My talk followed on quite nicely from Tu Anh’s presentation, as she basically solved the problem of “obvious proof steps” using her natural language approach to testing justification sub-patterns. The slides for my presentation are available here.

In summary, this first WoDOOM turned out really well, and the papers presented were very interesting. I also have to admit that I was very pleased with the rate of 75% female speakers / first authors, which is pretty awesome. I’m hoping that we’ll have some more papers next year, as at least two of this year’s took a very similar approach to debugging (justifications!), especially given Bijan’s highlighting of other errors which are currently not considered in most debugging approaches.

[Photo of Galway by Phalinn Ooi, cc-licensed]
